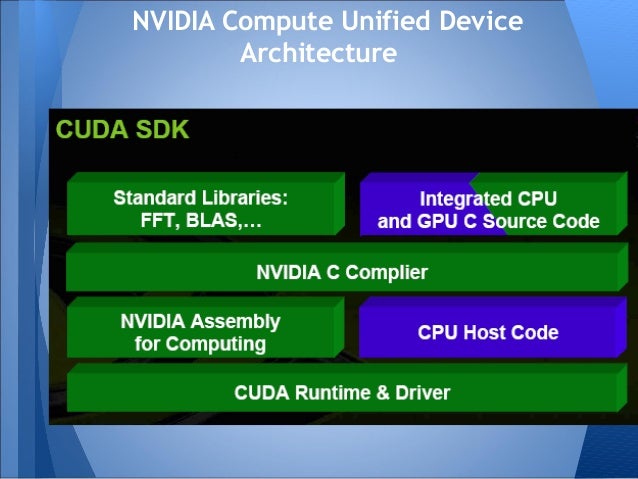

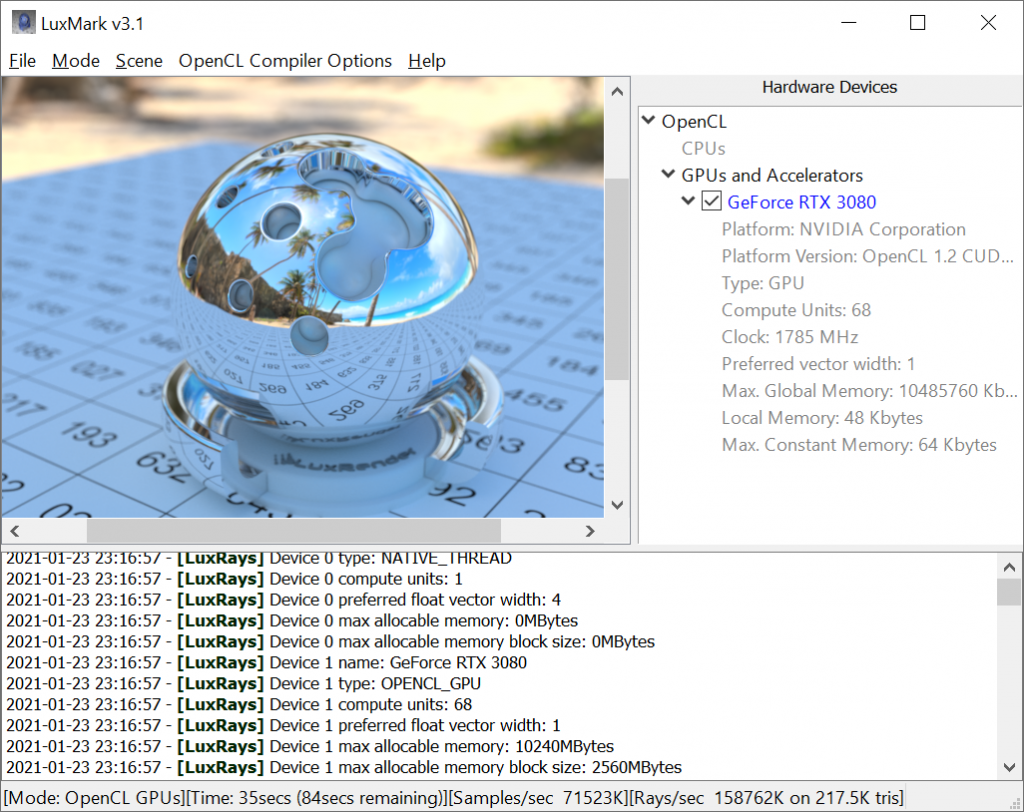

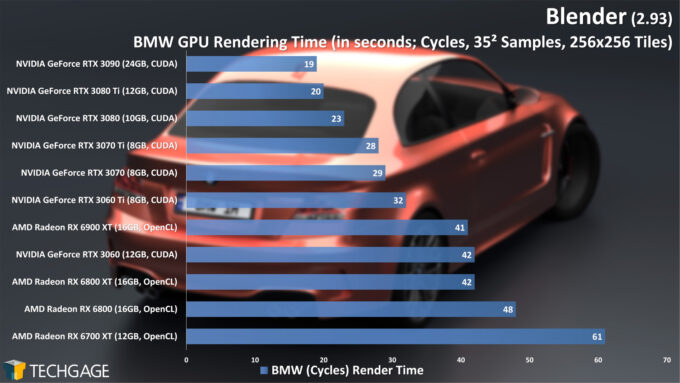

Please read this article about Microsoft and OpenCL before reading on. The requested benchmarking has been done by the people of Unigine, who have some results on the differences between the three big GPGPU APIs: part I, part II and part III, with some typical results. The following read is not about the technical differences between the three APIs, but about the reasons why alternative APIs are kept alive while OpenCL does the trick. Please comment if you think OpenCL doesn't do the trick.

As was described in that article, it is important for big companies (such as Microsoft and nVidia) to defend their market share. This protection is not provided by an open standard like OpenCL. As it went with OpenGL, which was more or less replaced by DirectX to gain market share for Windows, nVidia now does the same with CUDA. First we will sum up the many ways nVidia markets CUDA, and then we discuss the situation.

The way nVidia wanted to play the game soon became very clear: it wanted to market CUDA as the better alternative to OpenCL. The best way to gain acceptance is to give away free information, such as articles and courses. See CUDA's university courses as an example. Sponsoring also helps a lot, so the first mainstream-oriented books about GPGPU discussed CUDA first, and interesting conferences were always supported by CUDA's owner. Furthermore, loads of money is put into an expensive webpage with very complete information about GPGPU. nVidia does give a choice by also having implemented OpenCL in its drivers; it just does not have big pages on how to learn OpenCL.

AMD, having spent its money on buying ATI, could not put this much effort into the "war" and had to go for OpenCL. You have to know that AMD has faster graphics cards at lower prices than nVidia at the moment, so based on that it could become the winner in GPGPU (if that were the only thing that mattered). Intel saw the light too late and is even postponing its high-end GPU, the Larrabee. Apple does not want a strict dependency on nVidia, since it … The only help for them is that Apple demands OpenCL in nVidia's drivers, but for how long?

But what if all Apple developers create their applications on CUDA? Most developers, helped by the money and support of nVidia, see that there is only a little difference between CUDA and OpenCL, and in case of a changing market they could translate their programs from one to the other. For now, a demand to have a high-end nVidia video card can be justified: it is a card that many people actually have, or could easily buy within the budget of their current project.

The difference between CUDA and OpenCL is comparable to that between C# and Java, and the corporate tactics are also the same. Possibly nVidia will have better driver support for CUDA than for OpenCL, and since CUDA does not work on AMD cards, the conclusion will be that CUDA is faster. There could then be a situation in which AMD and Intel have to buy CUDA patents, since OpenCL does not have the support.

We hope that OpenCL will stay the mainstream GPGPU API, so the battle will be about hardware, drivers and support for higher-level programming languages. We really do appreciate what nVidia has already done for the GPGPU industry, but we hope they will embrace OpenCL exclusively for the sake of long-term market development.

What we left out of this discussion is Microsoft's DirectCompute. It will be used by game developers on the Windows platform who just need physics calculations. When discussing the game industry, we will tell more about Microsoft's DirectX extension. Also come back and read our upcoming article about FPGAs + OpenCL, a combination from which we expect a lot.

I have worked with both and found that CUDA is quite a bit more abstract, popular and supported than OpenCL. CUDA has its own variant of C, runs only on its own specific hardware, and is backed by a single company, whereas OpenCL uses a much closer-to-C language for its kernels and is backed by Apple, AMD, Intel, Nvidia and IBM. When working with OpenCL I felt that it was much closer to what was being run on the device than with CUDA. For example, you have to build a separate kernel file at runtime for the specific device(s) (it can be precompiled).
